The Sequential Network Request Pattern (and why you should avoid it!)

The Sequential Network Request pattern is a web performance anti-pattern, and it's unfortunately common in production web applications.

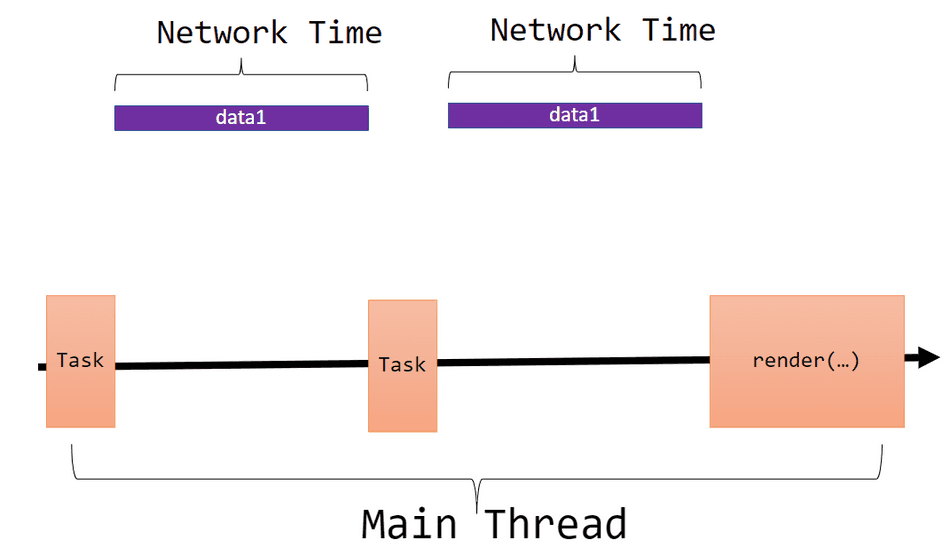

Conceptually, it works like this:

// Fetch data1

const data1 = await fetch('/api/data1');

// Fetch data2 sequentially after data1

const data2 = await fetch(createRequest(data1));

// Present data2 on-screen

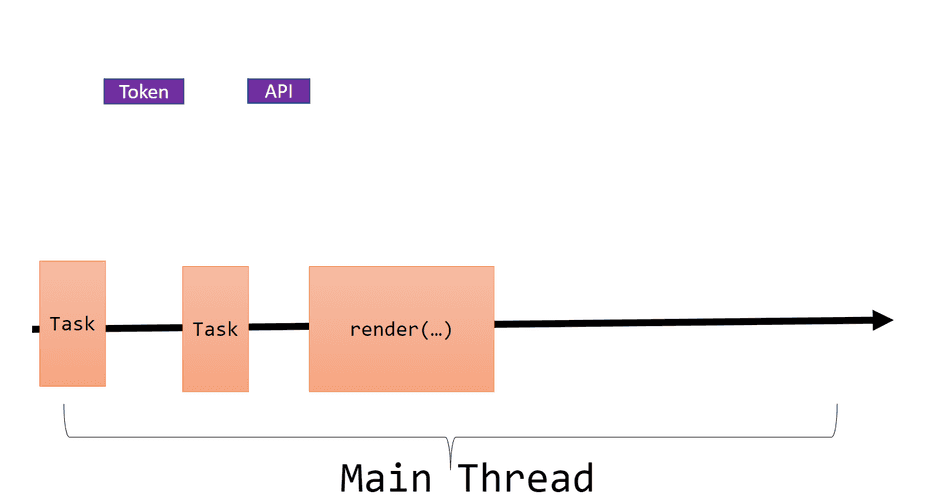

render(data2);In this example, data2 cannot begin request dispatch request until data1 is fully downloaded and available

on the thread. Only when data2 has been fully downloaded can the application present the UX on-screen, via render(...).

Our code above would be represented in the the following execution diagram:
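To make the ordering concrete, here is a minimal sketch that simulates the two requests with timers instead of real network calls. The names fakeFetch, loadSequentially, and log are hypothetical helpers invented for this illustration, and the 20 ms delays are arbitrary.

```javascript
// Simulated network call: resolves after `ms` milliseconds and records
// dispatch and completion in `log`. `fakeFetch` is a hypothetical
// stand-in for a real request, not a browser API.
function fakeFetch(name, ms, log) {
  log.push(`${name}: dispatched`);
  return new Promise((resolve) => {
    setTimeout(() => {
      log.push(`${name}: downloaded`);
      resolve({ name });
    }, ms);
  });
}

async function loadSequentially(log) {
  // The second request is built from the first response, so it is
  // dispatched only after data1 has fully arrived.
  const data1 = await fakeFetch('data1', 20, log);
  const data2 = await fakeFetch(`data2 (built from ${data1.name})`, 20, log);
  return data2;
}

const log = [];
loadSequentially(log).then(() => console.log(log.join('\n')));
```

The log shows data1 being dispatched and downloaded in full before data2 is even dispatched, so the total time is roughly the sum of both delays.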

Note: I use the terms Sequential, Serial, and Pipelined Network Request Pattern interchangeably.

Serially dispatched network requests, and the performance regressions they cause, are not limited to client web applications, but I'll be discussing the pattern from the perspective of a client-side web application.

Real Examples

The Sequential Network Request Pattern isn't always as obvious as the example above. Consider some of these more subtle examples that I've seen in production web applications.

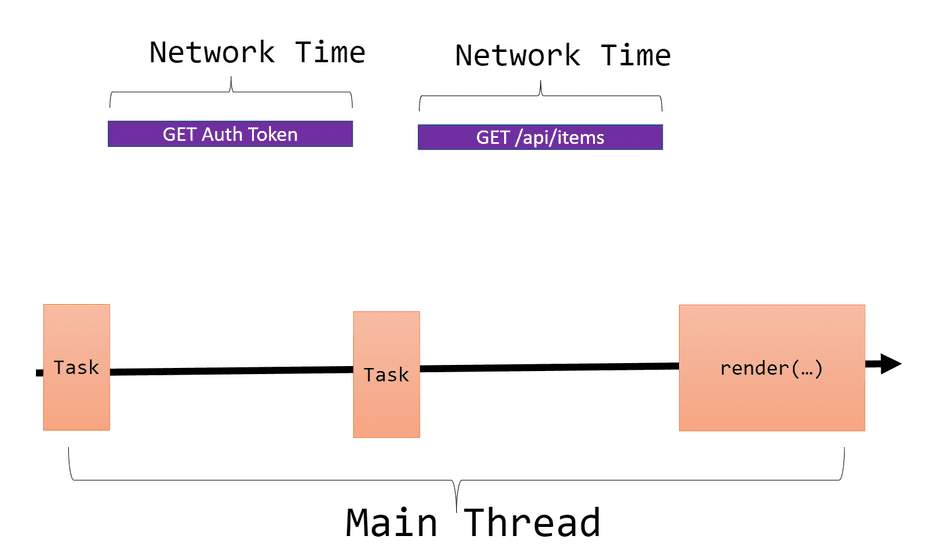

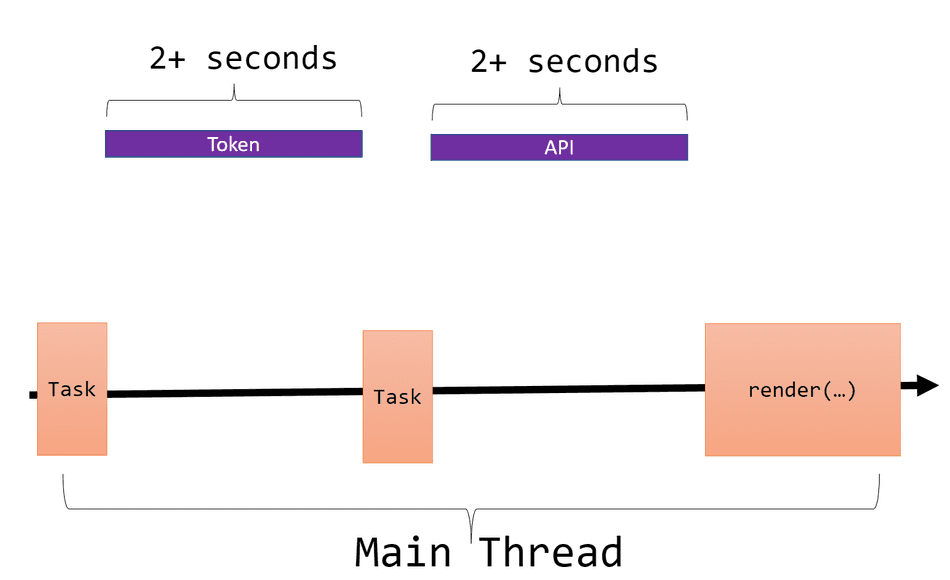

Example 1: Authorization tokens

Before a web application can access an authorized API, it often needs to acquire an access token:

    // Request 1: Acquire authorization token
    const token = await getToken();

    // Request 2: Use token to access the authorized API
    const response = await fetch('https://api.my-service.com/api/items', {
      headers: {
        'Authorization': `Bearer ${token}`
      }
    });
    const data = await response.json();

    // Render UX with data
    render(data);

This manifests as the following sequential network pattern:

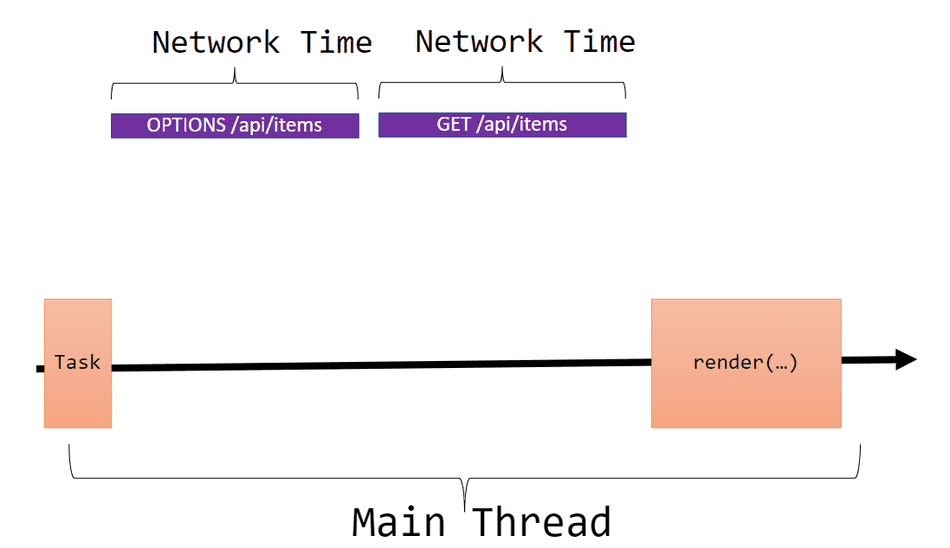

Example 2: CORS Preflight Requests

Web applications often must access remote resources on servers of a different origin than the one they are hosted on.

For example, the following code hosted on https://www.example.com would incur a CORS Preflight OPTIONS request:

    const response = await fetch('https://api.my-service.com/api/items', {
      headers: {
        'X-Session-Key': 'MyKey'
      }
    });

This manifests as the following sequential network pattern:

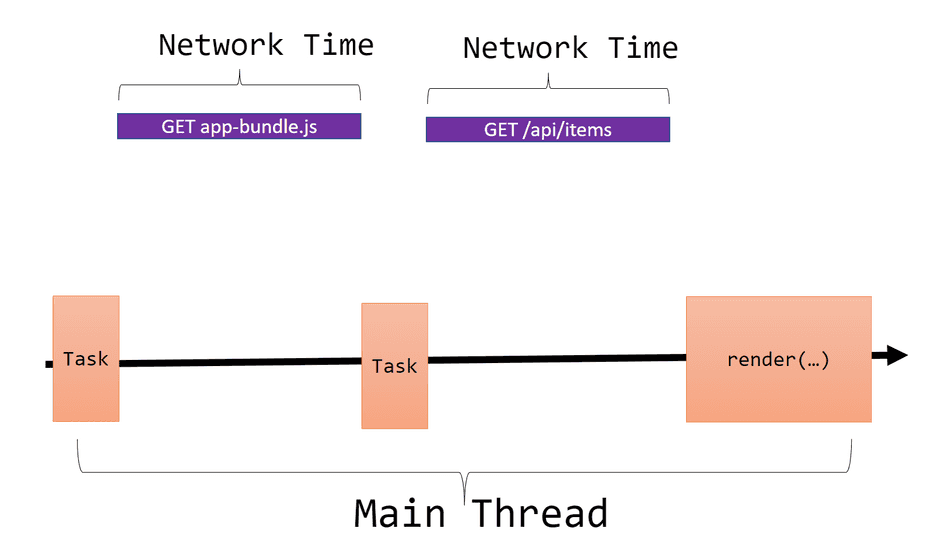
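Why does this request incur a preflight? For cross-origin requests, browsers skip the preflight only when the method and every author-set header are on the CORS safelist. The sketch below is a simplified approximation of that rule. needsPreflight is a hypothetical helper written for this illustration, and it deliberately ignores some details of the Fetch standard, such as header value restrictions and the Range header.

```javascript
// Simplified check for whether a cross-origin request triggers a CORS
// preflight. `needsPreflight` is a hypothetical helper; it omits some
// details of the Fetch standard (value restrictions, the Range header).
const SAFELISTED_METHODS = new Set(['GET', 'HEAD', 'POST']);
const SAFELISTED_HEADERS = new Set(['accept', 'accept-language', 'content-language']);
const SAFELISTED_CONTENT_TYPES = new Set([
  'application/x-www-form-urlencoded',
  'multipart/form-data',
  'text/plain',
]);

function needsPreflight(method, headers = {}) {
  if (!SAFELISTED_METHODS.has(method.toUpperCase())) return true;
  return Object.entries(headers).some(([name, value]) => {
    const lower = name.toLowerCase();
    if (SAFELISTED_HEADERS.has(lower)) return false;
    if (lower === 'content-type') {
      // Only the MIME type is compared; parameters such as charset are ignored.
      const mimeType = String(value).split(';')[0].trim().toLowerCase();
      return !SAFELISTED_CONTENT_TYPES.has(mimeType);
    }
    // Any other author-set header, e.g. X-Session-Key or Authorization.
    return true;
  });
}

console.log(needsPreflight('GET', { 'X-Session-Key': 'MyKey' })); // true
console.log(needsPreflight('GET', { Accept: 'application/json' })); // false
console.log(needsPreflight('POST', { 'Content-Type': 'application/json' })); // true
```

Note that the Authorization header from Example 1 is not safelisted either, so a cross-origin call with a bearer token typically pays for a preflight on top of the token request.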

Example 3: Fetch Bundles, then Data

Web applications often rely on client JavaScript to initiate data acquisition flows, like acquiring JSON API data to drive a UX.

Consider the following example:

    <!-- index.html -->
    <script src="https://my-cdn.com/app-bundle.js" type="text/javascript"></script>

    // app-bundle.js
    fetch('https://api.my-service.com/api/items')
      .then((response) => response.json())
      .then((data) => render(data));

This manifests as the following sequential network pattern:

Notably, the API data fetch is not initiated until after app-bundle.js has been downloaded, parsed, compiled, and executed.

The Problem

Designing APIs or call patterns that require a sequential "back and forth" between a client and one or more servers leads to network-bound performance bottlenecks.

For user-critical scenarios, any network-bound dependency should be considered with extreme scrutiny.

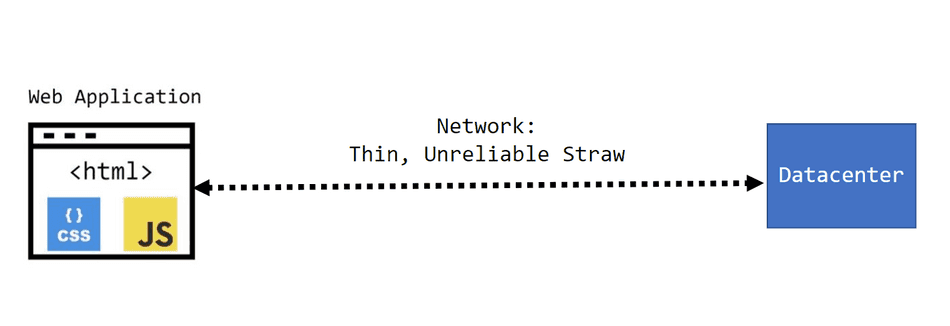

A Framework for Considering the Network

The framework I recommend for reasoning about network requests is to treat the connection between the client and any remote datacenter as a thin, unreliable straw:

This framework encourages minimal sequential network requests in order to minimize time spent transferring data across this thin, unreliable straw.

Local vs. Real User

While connection speeds may not manifest as a bottleneck in your local testing, consider throttling your connection speed to better empathize with users on a slow or unreliable connection.

Your users may be observing significantly higher latency than you see locally.

To capture this information, make sure you use the Resource Timing API and integrate the data into your telemetry systems.
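As a rough sketch of what that could look like, the function below summarizes timing entries and flags requests that started only after the previous one finished. The fields name, startTime, and responseEnd exist on the browser's PerformanceResourceTiming entries; summarizeTimings and the sample values are hypothetical and only illustrate the idea.

```javascript
// Summarize resource timing entries. `summarizeTimings` and the sample
// entries below are hypothetical; only the field names come from the
// browser's PerformanceResourceTiming interface.
function summarizeTimings(entries) {
  const sorted = [...entries].sort((a, b) => a.startTime - b.startTime);
  return sorted.map((entry, index) => {
    const previous = sorted[index - 1];
    return {
      name: entry.name,
      durationMs: entry.responseEnd - entry.startTime,
      // True when this request started only after the previous one finished,
      // which hints at (but does not prove) a sequential dependency.
      startedAfterPrevious: Boolean(previous) && entry.startTime >= previous.responseEnd,
    };
  });
}

// In a browser, real entries could be passed instead:
//   summarizeTimings(performance.getEntriesByType('resource'));
const sample = [
  { name: '/app-bundle.js', startTime: 50, responseEnd: 250 },
  { name: '/api/items', startTime: 300, responseEnd: 520 },
];
console.log(summarizeTimings(sample));
```

The resulting summary can then be sent to whatever telemetry pipeline you already use, so the sequential chains show up in real user data rather than only in local testing.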

Sequential Chaining Anti-pattern

If your critical user scenarios require sequential reads through this thin, unreliable straw, you are naturally incurring additional latency.

For each sequential network dependency in your critical path, your users bear the full round-trip cost, and those costs add up.

Consider the following sequential chain:

- A user downloads the index.html page
- A user then downloads app-bundle.js
- A user then tries to fetch remote JSON API data from a service on another origin. This requires a CORS OPTIONS Preflight request.
- Finally, once the CORS OPTIONS Preflight succeeds, a user may finally call the API for JSON data, and the UX is presented once it's received

Each of these steps adds a finite amount of network transfer time to the critical path. In this case, the UX can finally be presented after 4 sequential network round trips, with each step adding to the network bottleneck.
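The arithmetic is simple but worth spelling out: because each step waits for the previous one, the time to first render is the sum of the steps. The sketch below uses the four steps from the chain above; criticalPathMs is a hypothetical helper, and the millisecond values are made-up illustrations rather than measurements.

```javascript
// Estimate time to first render for a chain of dependent requests.
// The step names mirror the chain described above; the millisecond
// values are invented for illustration only.
function criticalPathMs(steps) {
  return steps.reduce((total, step) => total + step.ms, 0);
}

const chain = [
  { name: 'index.html', ms: 100 },
  { name: 'app-bundle.js', ms: 150 },
  { name: 'CORS preflight (OPTIONS)', ms: 100 },
  { name: 'JSON API data', ms: 200 },
];

console.log(criticalPathMs(chain)); // 550

// Removing one step (say, the preflight) shortens the path by that step's cost.
const withoutPreflight = chain.filter((step) => !step.name.startsWith('CORS'));
console.log(criticalPathMs(withoutPreflight)); // 450
```

On a slow connection every term in that sum grows, which is why each sequential step removed from the critical path pays off most for the users who need it most.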

Mitigation

It's not always possible to fully eliminate all sequential network requests, but there are certainly strategies one can use to help mitigate them as much as possible!

Some techniques include caching network dependencies for repeat visits, leveraging datacenter locality for bulk operations, utilizing Point of Presence Proxies to enhance connection reliability, parallelizing network requests, and bypassing CORS for cross-origin network requests.
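As one illustration of parallelizing network requests, the sketch below dispatches two independent requests together with Promise.all. The requests are simulated with timers, and fakeFetch, loadInParallel, and the delays are hypothetical. This only applies when neither request needs the other's result; a true dependency such as token-then-API cannot be parallelized this way.

```javascript
// Simulated network call: resolves after `ms` milliseconds and records
// dispatch and completion in `log`. `fakeFetch` is a hypothetical
// stand-in for a real request, not a browser API.
function fakeFetch(name, ms, log) {
  log.push(`${name}: dispatched`);
  return new Promise((resolve) => {
    setTimeout(() => {
      log.push(`${name}: downloaded`);
      resolve({ name });
    }, ms);
  });
}

async function loadInParallel(log) {
  // Neither request needs the other's result, so both are dispatched
  // immediately and awaited together.
  const [user, items] = await Promise.all([
    fakeFetch('user', 20, log),
    fakeFetch('items', 10, log),
  ]);
  return { user, items };
}

const log = [];
loadInParallel(log).then(() => console.log(log.join('\n')));
```

Both requests are dispatched before either one completes, so the wait is roughly the duration of the slowest request rather than the sum of both.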

That's all for this tip! Thanks for reading!